Summary

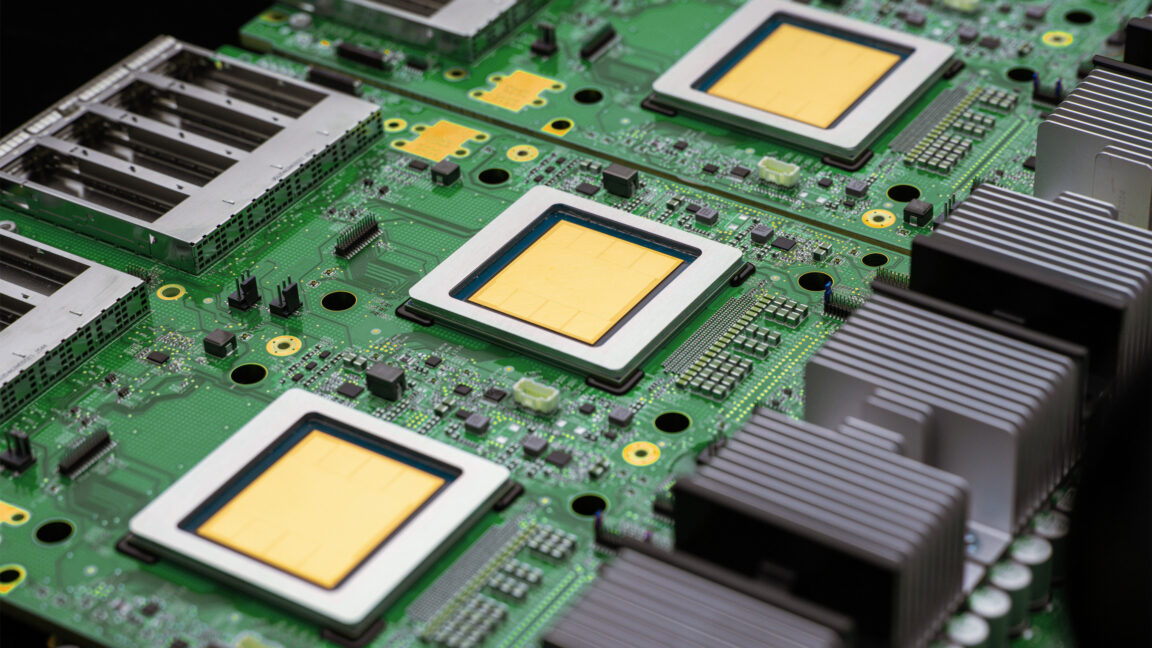

Google has officially introduced its eighth generation of custom AI chips, known as Tensor Processing Units (TPUs). These new processors are specifically built to handle the next phase of artificial intelligence, which Google calls the "agentic era." Unlike previous versions, this generation is split into two distinct models: one designed for training large AI systems and another for running them efficiently. This move helps Google stay competitive as more companies look for faster and cheaper ways to build advanced digital tools.

Main Impact

The release of the TPU 8 series marks a major shift in how AI hardware is built. By creating specialized chips for different tasks, Google aims to make it possible to develop massive AI models in a fraction of the time it used to take. The biggest impact will be felt by developers and businesses that use Google Cloud, as they can now move from the design phase to a finished product much faster. This change supports the rise of AI "agents": programs that can perform complex tasks on their own rather than just answering simple questions.

Key Details

What Happened

Google announced two new versions of its eighth-generation AI hardware: the TPU 8t and the TPU 8i. The "t" in TPU 8t stands for training. This chip is the workhorse used to teach an AI model how to understand language, recognize images, or solve problems. The "i" in TPU 8i stands for inference. This chip is used once the AI is already trained and needs to respond to user requests in real time. By splitting the hardware this way, Google can optimize each chip for its specific job, leading to better performance and lower energy use.
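To make the training/inference distinction concrete, here is a minimal sketch in plain Python. It is not TPU code and does not reflect how Google's chips are programmed; the one-parameter model and the names `predict` and `train` are assumptions chosen purely for illustration. Training loops over the data many times and updates the weight, while inference is a single forward pass with the weight held fixed.

```python
# Illustrative only: a one-parameter linear model y = w * x.
# Nothing here is TPU-specific; all names are hypothetical.

def predict(w, x):
    """Inference: one forward pass with a fixed weight."""
    return w * x

def train(data, w=0.0, lr=0.01, epochs=200):
    """Training: many forward passes, each followed by a weight update."""
    for _ in range(epochs):
        for x, y in data:
            error = predict(w, x) - y   # forward pass and error
            grad = 2 * error * x        # derivative of squared error w.r.t. w
            w -= lr * grad              # gradient-descent update
    return w

# Toy data generated by the rule y = 2 * x.
data = [(1.0, 2.0), (2.0, 4.0), (3.0, 6.0)]

w = train(data)                # the expensive, repeated phase
answer = predict(w, 4.0)       # the cheap, per-request phase
print(round(w, 3), round(answer, 3))
```

Even in this toy, training performs hundreds of passes over the data while inference performs one multiplication per request, which is the workload difference that motivates separate hardware.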

Important Numbers and Facts

This announcement comes just one year after Google released its seventh-generation chip, called Ironwood, in 2025. The rapid pace of these releases shows how quickly the industry is moving. Google claims that the new TPU 8t can reduce the time needed to train the most advanced AI models from several months down to just a few weeks. If the claim holds, it is a major improvement for researchers who previously had to wait months to see whether their AI designs actually worked. The new chips are also designed to work together in large groups, allowing thousands of them to function as one giant supercomputer.
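One common way many accelerators cooperate on a single training job is data parallelism: each device computes a gradient on its own slice of the batch, and the results are averaged. The article does not say which scheme the TPU 8 series uses, so the sketch below is an assumption for illustration only, with hypothetical function names and simulated "workers" running in an ordinary Python loop.

```python
# Illustrative only: simulated data parallelism for the model y = w * x.
# Real systems run the shards on separate chips; here they run in a loop.

def mean_gradient(w, batch):
    """Mean gradient of squared error over one batch."""
    return sum(2 * (w * x - y) * x for x, y in batch) / len(batch)

def data_parallel_gradient(w, batch, num_workers):
    """Split the batch into equal shards, compute one gradient per shard,
    then average the shard gradients."""
    shard_size = len(batch) // num_workers
    shards = [batch[i * shard_size:(i + 1) * shard_size]
              for i in range(num_workers)]
    shard_grads = [mean_gradient(w, shard) for shard in shards]
    return sum(shard_grads) / num_workers

# Eight toy examples following y = 2 * x, split across four simulated workers.
batch = [(float(x), 2.0 * x) for x in range(1, 9)]

full = mean_gradient(0.5, batch)
parallel = data_parallel_gradient(0.5, batch, num_workers=4)
print(full, parallel)
```

With equal-sized shards, the averaged result matches the single-device gradient, which is why a group of chips can behave like one larger machine for this step.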

Background and Context

To understand why these chips matter, it helps to look at the rest of the tech world. Most companies that build AI, like OpenAI or Meta, rely heavily on chips made by Nvidia. These chips are powerful, but they can be expensive and hard to obtain. Google decided years ago to build its own chips to reduce its dependence on outside suppliers. These custom chips, the TPUs, are only available through Google’s own cloud services.

The "agentic era" mentioned by Google refers to a new trend where AI acts as an assistant that can complete multi-step goals. For example, instead of just writing an email, an AI agent might look at your calendar, book a flight, and then send the confirmation to your boss. Doing this requires much more computing power and faster response times than simple chatbots, which is why Google felt new hardware was necessary.
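The multi-step example above can be sketched as a small loop that calls one tool after another and carries state between steps. This is a hedged illustration, not Google's agent design: every tool is a hypothetical stub that returns canned data, and the plan is hard-coded, whereas a real agent would have a model choose each next step.

```python
# Illustrative only: every tool below is a hypothetical stub, not a real API.

def check_calendar(state):
    state["free_day"] = "Tuesday"            # pretend the calendar was read
    return "calendar checked"

def book_flight(state):
    state["booking"] = f"flight on {state['free_day']}"
    return "flight booked"

def send_confirmation(state):
    state["sent"] = f"confirmation for {state['booking']}"
    return "confirmation sent"

TOOLS = {
    "check_calendar": check_calendar,
    "book_flight": book_flight,
    "send_confirmation": send_confirmation,
}

def run_agent(plan):
    """Run each step in order, sharing state so later steps
    can use the results of earlier ones."""
    state, log = {}, []
    for step in plan:
        log.append(TOOLS[step](state))
    return state, log

state, log = run_agent(["check_calendar", "book_flight", "send_confirmation"])
print(log)
print(state["sent"])
```

Each step depends on the one before it, so the user waits for the whole chain to finish. That dependency is one reason agent workloads are sensitive to inference speed.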

Public or Industry Reaction

Industry experts see this as a direct challenge to Nvidia’s dominance in the AI market. While Nvidia still holds the largest share of the market, Google’s ability to build its own hardware gives it a unique advantage in terms of cost and speed. Cloud customers are likely to welcome the new chips because they offer a way to run AI programs more efficiently. However, some analysts point out that because these chips are exclusive to Google Cloud, they may limit where developers can build their software. Despite this, the promise of cutting training time from months to weeks is a strong draw for the world's largest tech firms.

What This Means Going Forward

The introduction of the TPU 8 series suggests that the race for AI hardware is only getting faster. We can expect to see AI models become much more capable in a shorter amount of time. As training becomes faster and cheaper, more companies will likely try to build their own custom AI systems. This could lead to a surge in specialized AI tools for medicine, law, and engineering. For everyday users, this means that the AI tools they use will likely become faster and more reliable as the "agentic" features begin to roll out in common apps and websites.

Final Take

Google is doubling down on its strategy of building its own tools from the ground up. By focusing on the specific needs of AI agents, the company is positioning itself as a leading destination for the next wave of AI development. The split between training and inference chips reflects the different technical demands of the two workloads. If these chips perform as well as promised, AI development is about to shift into a higher gear.

Frequently Asked Questions

What is the difference between TPU 8t and TPU 8i?

The TPU 8t is built for training AI models, which is the process of teaching the AI using large amounts of data. The TPU 8i is built for inference, which is the process of the AI actually answering questions or performing tasks for a user.

Why does Google build its own AI chips?

Google builds its own chips to save money and to make sure the hardware is well suited to its own software. Doing so also helps the company avoid the long wait times and high costs associated with buying chips from other companies like Nvidia.

What is an AI agent?

An AI agent is a type of artificial intelligence that can work independently to achieve a goal. Unlike a simple chatbot that just talks, an agent can use tools, browse the web, and complete tasks like booking a hotel or organizing a project without constant human help.